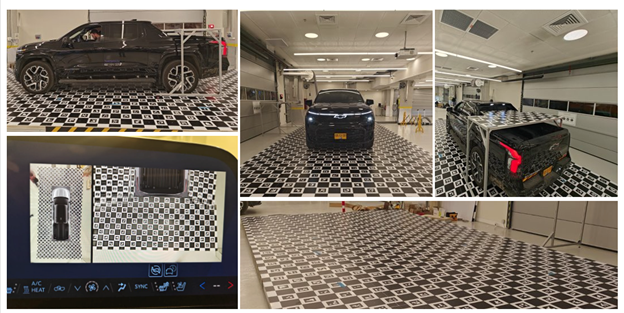

GM is using a vehicle-anchored geometry platform to scale camera-based driver assistance features across multiple vehicle models

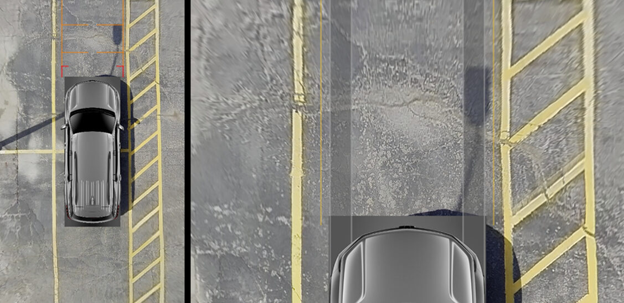

General Motors has detailed the camera intelligence stack underpinning its Top-Down View and Transparent Trailer driver assistance features, built on a unified vehicle-anchored geometry platform shared across multiple models and camera layouts. The automaker said the architecture is designed to scale across future surround-view and trailering features without requiring camera-specific software logic.

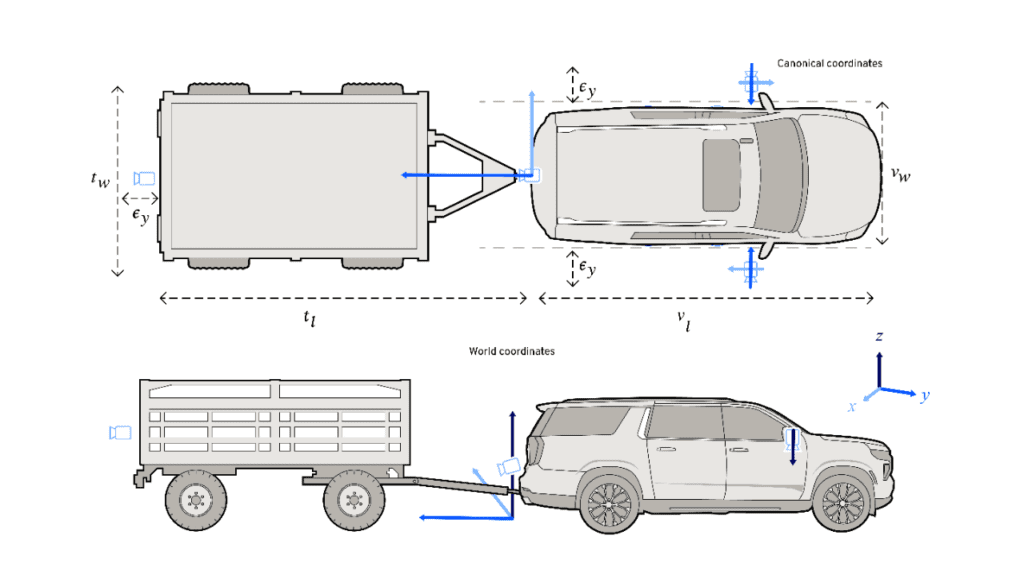

The stack treats vehicle cameras as geometric sensors rather than passive image feeds, projecting wide-angle camera pixels into a common rear-bumper-ground coordinate frame. This allows the system to reason consistently about curbs, lane edges and obstacles regardless of which camera observed them, and to maintain stable composite views as the vehicle moves.
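To make the idea of a common ground frame concrete, the following is a minimal sketch of projecting one camera pixel onto the ground plane. It is not GM's implementation. It assumes an ideal pinhole camera with lens distortion already removed (a real wide-angle camera would need a fisheye model), a vehicle frame with its origin on the ground under the rear bumper (x forward, y left, z up), and hypothetical names such as `pixel_to_ground`, `R` and `cam_pos`.

```python
def pixel_to_ground(u, v, fx, fy, cx, cy, R, cam_pos):
    """Intersect the viewing ray of pixel (u, v) with the ground plane z = 0.

    R is a 3x3 rotation (list of rows) that takes camera-frame vectors into
    the vehicle frame; cam_pos is the camera centre in the vehicle frame, in
    metres. Returns (x, y) on the ground, or None if the ray never hits it.
    """
    # Pinhole back-projection (assumption: distortion already corrected).
    ray_cam = ((u - cx) / fx, (v - cy) / fy, 1.0)
    # Rotate the ray into the shared vehicle frame.
    ray_veh = [sum(R[i][j] * ray_cam[j] for j in range(3)) for i in range(3)]
    if ray_veh[2] >= 0.0:
        return None  # ray points at or above the horizon
    # Scale the ray so that it ends exactly on z = 0.
    t = -cam_pos[2] / ray_veh[2]
    return (cam_pos[0] + t * ray_veh[0], cam_pos[1] + t * ray_veh[1])


# Hypothetical camera 2 m above the origin, looking straight down,
# with the top of the image pointing toward the front of the vehicle.
R_DOWN = [[0.0, -1.0, 0.0],
          [-1.0, 0.0, 0.0],
          [0.0, 0.0, -1.0]]
point = pixel_to_ground(570, 240, 500.0, 500.0, 320.0, 240.0,
                        R_DOWN, (0.0, 0.0, 2.0))
```

Because every camera reports points in the same vehicle frame, a curb seen by a side camera and the same curb seen by the rear camera should land on the same ground coordinates, provided each camera's rotation and position are accurate.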

A key element is what GM terms Online Alignment (OLA), which continuously refines each camera’s extrinsic calibration parameters during normal driving rather than relying on factory calibration alone. The automaker noted that orientation errors of less than 0.1 degrees are enough to produce visible seams and misalignments, with mounting tolerances, suspension travel, load changes and temperature shifts all introducing drift over time.
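A rough calculation shows why such small angles matter. The sketch below assumes a simple flat-ground model in which a camera's assumed pitch is off by a small angle, and estimates how far a ground point appears to move as a result. The camera height and distances are illustrative values, not figures from GM, and `ground_shift_from_pitch_error` is a hypothetical helper.

```python
import math


def ground_shift_from_pitch_error(cam_height_m, ground_dist_m, pitch_error_deg):
    """Apparent shift (metres) of a ground point caused by a pitch error.

    Flat-ground assumption: the true ray leaves the camera at a depression
    angle of atan(height / distance); the miscalibrated system treats the
    same ray as slightly shallower and places the point farther away.
    """
    true_angle = math.atan2(cam_height_m, ground_dist_m)
    wrong_angle = true_angle - math.radians(pitch_error_deg)
    if wrong_angle <= 0.0:
        raise ValueError("pitch error pushes the ray to or above the horizon")
    apparent_dist_m = cam_height_m / math.tan(wrong_angle)
    return apparent_dist_m - ground_dist_m


# Illustrative case: camera 1 m above the ground, 0.1 degree pitch error.
shift_at_5m = ground_shift_from_pitch_error(1.0, 5.0, 0.1)
shift_at_10m = ground_shift_from_pitch_error(1.0, 10.0, 0.1)
```

Under these assumptions the shift is a few centimetres at 5 m and well over 10 cm at 10 m, and it grows roughly with the square of the distance. Where two camera views meet in a composite image, an offset of that size can show up as a visible seam.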

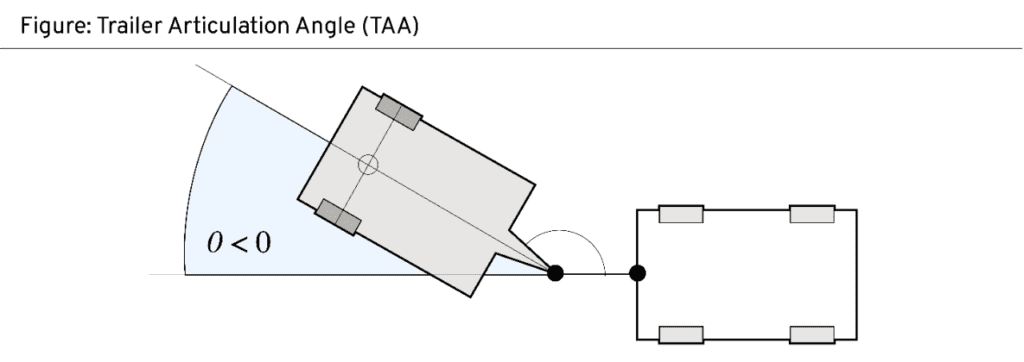

For Transparent Trailer, the system fuses streams from a rear vehicle camera and an accessory rear trailer camera, reconstructing occluded regions in real time using trailer articulation angle data updated approximately every 33 milliseconds. The output is rendered in the rear vehicle camera’s perspective using a hybrid CPU/GPU pipeline.
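An update every 33 milliseconds corresponds to roughly 30 updates per second. The geometric role of the articulation angle can be sketched as follows. This is a top-down simplification under stated assumptions, not GM's pipeline: the trailer pivots rigidly about the hitch, its rear camera sits on the trailer centreline, and the function and parameter names are hypothetical.

```python
import math


def trailer_camera_pose(articulation_deg, hitch_x_m, hitch_to_camera_m):
    """Top-down pose of the trailer's rear camera in the vehicle frame.

    The vehicle frame has x forward and y left. The hitch sits on the
    vehicle centreline at x = hitch_x_m. The articulation angle is zero
    when the trailer is straight behind the vehicle and positive for a
    counter-clockwise rotation seen from above.
    Returns (x, y, heading_rad); heading is the direction the camera faces.
    """
    phi = math.radians(articulation_deg)
    # The trailer axis points rearward from the hitch, rotated by phi.
    x = hitch_x_m - hitch_to_camera_m * math.cos(phi)
    y = -hitch_to_camera_m * math.sin(phi)
    heading = math.pi + phi  # camera looks rearward along the trailer axis
    return x, y, heading


# Illustrative values: hitch 0.3 m behind the origin, camera 6 m behind the hitch.
straight = trailer_camera_pose(0.0, -0.3, 6.0)
turned = trailer_camera_pose(15.0, -0.3, 6.0)
```

With a fresh pose on each update, the trailer camera's image can be re-projected into the rear vehicle camera's perspective so that the region hidden by the trailer stays aligned as the trailer swings.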

GM said the architecture also supports augmented-reality overlays anchored in real-world 3D coordinates.

Source: GM

