Autonomous vehicle developers may be sharing safety data, but the comparisons are far from transparent.

By Megan Lampinen

Autonomous driving (AD) proponents have presented their solutions as the key to improving road safety. A computerised system is never drunk, drowsy or distracted, and hence inherently safer than the average human driver. That’s the promise, but where’s the proof?

Specifics vary by market, but AD systems are generally evaluated within a defined operational design domain (ODD), where safety is demonstrated under specific conditions. Benchmarks such as fatalities per 100 million vehicle miles travelled or disengagement rates provide important context for evaluation. Waymo is among the more transparent developers when it comes to releasing crash and miles-driven data. In an interview with Bloomberg, David Kidd, Vice President for vehicle research at the Insurance Institute for Highway Safety, described the company as “the standard for data sharing.” And some of that data certainly looks good.

“We conduct extensive safety comparisons between human and automated driving utilising peer-reviewed methodology and retrospective analyses, which we then report to the federal government,” states Waymo. The Waymo Driver has been involved in ten times fewer ‘serious injury or worse’ crashes, 12 times fewer injury-causing crashes involving a pedestrian, five times fewer crashes with airbag deployment, and five times fewer injury-causing crashes compared to human drivers covering the same mileage in the cities where it operates, on the same road types and in the same conditions.

That last bit is important. “Comparisons are highly dependent on geography, traffic, environment, and diverse regulations,” notes Suraj Gajendra, Vice President of Products and Solutions at Arm’s Physical AI Business Unit. The company provides the foundational compute behind AI-powered systems and has worked closely with AD system developers.
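Figures such as “ten times fewer” crashes are ratios of distance-normalised crash rates, for example crashes per 100 million vehicle miles travelled. The short Python sketch below shows that arithmetic under stated assumptions: every crash count and mileage in it is a hypothetical placeholder, not Waymo or human-driver data, and the helper names `rate_per_100m` and `times_fewer` are illustrative. It makes Gajendra’s point concrete: the same AV record produces a different ratio depending on which human baseline it is measured against.

```python
# Hypothetical illustration only: none of these numbers are real crash data.

PER_MILES = 100_000_000  # normalise to crashes per 100 million vehicle miles


def rate_per_100m(crashes: int, miles: float) -> float:
    """Return crashes per 100 million vehicle miles travelled."""
    if miles <= 0:
        raise ValueError("miles must be positive")
    # Multiply before dividing to keep these round numbers exact in floating point.
    return crashes * PER_MILES / miles


def times_fewer(av_rate: float, human_rate: float) -> float:
    """How many times lower the AV crash rate is than the human baseline rate."""
    return human_rate / av_rate


# Hypothetical AV fleet record.
av = rate_per_100m(crashes=20, miles=50_000_000)  # 40.0

# Two hypothetical human baselines, e.g. different cities or road types.
baselines = {
    "city_a": rate_per_100m(crashes=2_000, miles=1_000_000_000),  # 200.0
    "city_b": rate_per_100m(crashes=1_200, miles=1_000_000_000),  # 120.0
}

for name, human in baselines.items():
    print(f"{name}: {times_fewer(av, human):.1f}x fewer crashes")
```

With these placeholder numbers the script prints `city_a: 5.0x fewer crashes` and `city_b: 3.0x fewer crashes`. Normalising by miles is what makes fleets of different sizes comparable at all; on its own, it does not control for geography, road type or conditions.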

Waymo has also worked with reinsurance company Swiss Re to highlight the insurance benefits of its system. Based on data from Waymo’s first 25 million fully autonomous miles, the partners concluded that the Waymo Driver was associated with 88% fewer property damage claims and 92% fewer bodily injury claims than human drivers.

But here is where there’s room for confusion: “There is currently no single universal method for measuring a human baseline,” says Gajendra. In the real world, every human is different, and studies consistently show that human drivers overestimate how safely they drive. Javier Ibañez-Guzmán, Corporate Expert on Autonomous Systems at Renault Group, refers to “the myth of the average driver” as a development headwind, one that applies to everything from driving style to design preferences.

In the UK, a “careful and competent driver” is the legal standard representing a motorist who exercises reasonable skill, attention, and consideration for other road users. The UK’s Automated Vehicles Act 2024 stipulates that the Secretary of State for Transport must publish a Statement of Safety Principles ensuring authorised self-driving vehicles “are as safe as, or safer than, competent human drivers.” But that’s as defined as it gets.

“There is no universally agreed ‘careful and competent driver’ standard,” echoes Tom Leggett, Vehicle Technology and Research Manager at Thatcham Research, a UK-based specialist in automotive risk intelligence. “Real-world safety metrics vary enormously depending on region. Are you comparing by region, by vehicle type, or by type of driver: professional taxi driver, HGV licence holder, etc.? ‘Careful and competent driver’ could mean a lot of things.”

A spokesperson for Partners for Automated Vehicles Education (PAVE) concedes that “the ‘average human driver’ is itself an imperfect benchmark.” They note that developers generally use a variety of reference points depending on factors such as vehicle type and where and how they intend to operate, so comparisons are not always apples-to-apples. PAVE encourages a more nuanced view: rather than relying on a single human baseline, it suggests that safety assessment combine real-world data, simulation, testing, and transparent reporting. “Ultimately, the goal is not simply to match human performance but to increase roadway safety meaningfully,” the spokesperson tells Automotive World. “Clear and accessible public education about how safety is measured is also essential to building understanding and trust as the technology evolves.”

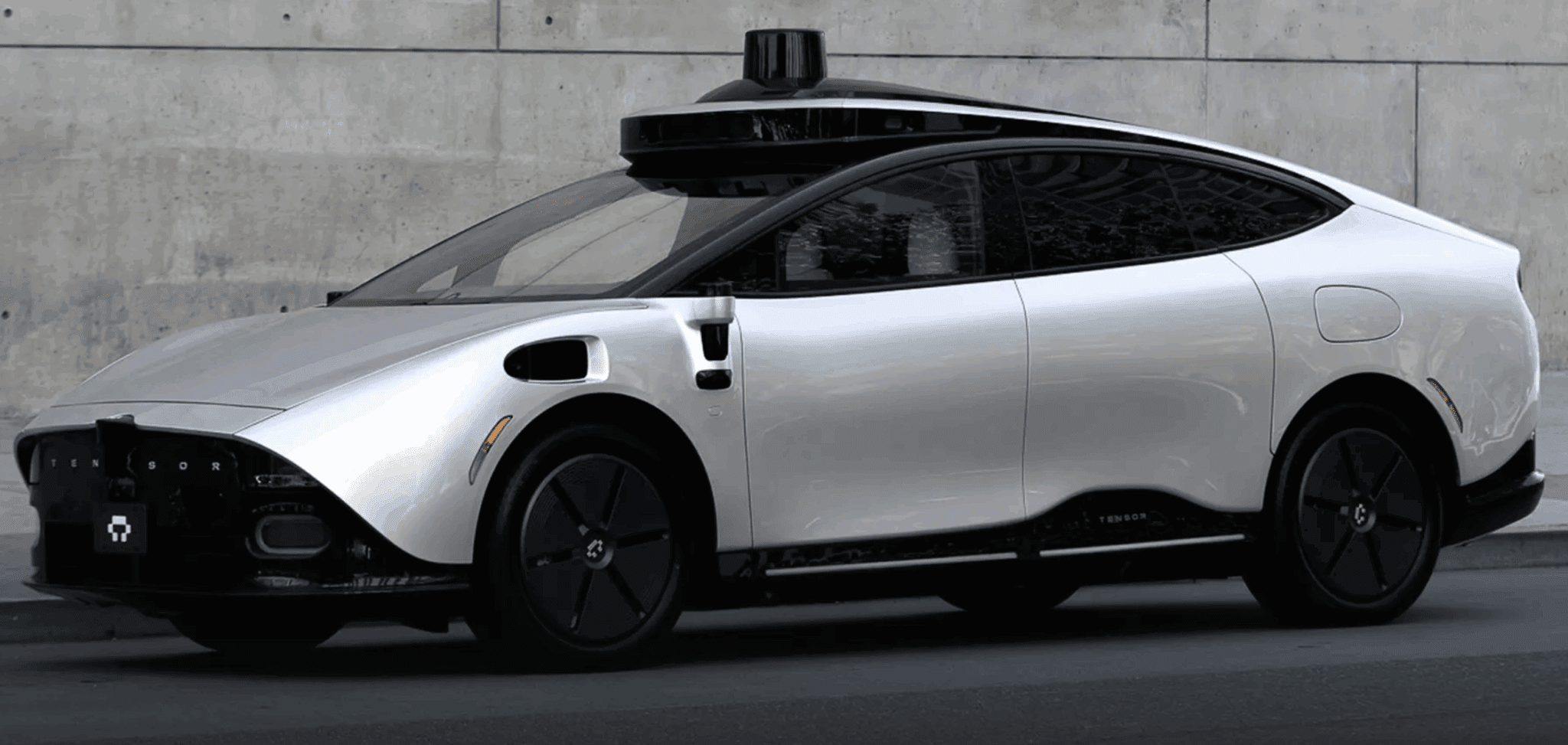

Tensor, the California-based developer of the upcoming SAE Level 4 Robocar, presents its Safety Case Framework as a “pledge to the public that safety is not merely a priority but a fundamental principle ingrained in every facet of our operation.” Using the Claims, Arguments, and Evidence (CAE) notation, the Safety Case sets out claims about the safety of the system or its components, together with arguments that show the logical reasoning connecting each claim to its evidence. The evidence consists of objective, verifiable information that supports the claims and arguments.
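To make the claim-argument-evidence structure more concrete, here is a minimal Python sketch of a CAE tree. It is a simplified illustration, not Tensor’s format or any formal CAE tooling: the class names, the example claims and the `is_supported` rule (a claim counts as supported only if every argument cites at least one piece of evidence and every sub-claim is itself supported) are assumptions made for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Evidence:
    """Objective, verifiable information, e.g. a test report (hypothetical)."""
    description: str


@dataclass
class Argument:
    """Reasoning that links a claim to the evidence supporting it."""
    reasoning: str
    evidence: list[Evidence] = field(default_factory=list)


@dataclass
class Claim:
    """A statement about the safety of the system or one of its components."""
    statement: str
    arguments: list[Argument] = field(default_factory=list)
    subclaims: list[Claim] = field(default_factory=list)

    def is_supported(self) -> bool:
        """Assumed rule: every argument cites evidence and every sub-claim is
        supported. A claim with no arguments and no sub-claims is unsupported."""
        if not self.arguments and not self.subclaims:
            return False
        return all(a.evidence for a in self.arguments) and all(
            c.is_supported() for c in self.subclaims
        )


# Hypothetical example: a top-level claim decomposed into one sub-claim.
braking = Claim(
    "Emergency braking stops the vehicle within the required distance",
    arguments=[
        Argument(
            "Track tests cover the specified speed range",
            [Evidence("Hypothetical track test report")],
        )
    ],
)
top = Claim("The vehicle is acceptably safe within its ODD", subclaims=[braking])
print(top.is_supported())
```

Here `top.is_supported()` returns `True`, because its only sub-claim rests on an argument that cites evidence. Representing the safety case as a tree like this makes gaps visible: a claim whose argument cites no evidence fails the check rather than passing silently.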

“The industry is converging on a standardised Safety Case approach rather than relying on a single performance metric,” explains a company spokesperson. “Developers use a mix of approaches: normalised naturalistic driving comparisons, scenario-based testing matrices, root-cause incident taxonomy, simulation coverage metrics, and safety case arguments aligned with functional safety principles.”

One of the big problems with all these approaches is the source of the safety data. “It all comes from the developers themselves,” emphasises Leggett. “Waymo is constantly publishing reports, and it does a lot of good when it comes to that level of transparency. But ultimately it’s hard to build trust when all the data comes from people selling the AVs.”

Meanwhile, some safety agencies are calling for government- or agency-led safety verification of AVs, which could help build consumer confidence. “The overarching aim is to prove that AVs won’t make road safety worse,” concludes Leggett. “But how do you do that when you only have data from the company’s own testing, showing its own point of view?”

The next couple of years will see AV deployments gain momentum across the US, China and Europe, but with no clear-cut safety comparison, success or failure could prove a matter of marketing more than engineering.
